What Successful AI Strategies Actually Have in Common

Most organizations treat AI as a technology decision. The ones generating real returns treat it as a business decision first. Here is our perspective on the four traits that separate companies producing measurable results from those stuck in pilot mode.

Not every company that invested in AI has something to show for it. Most, in fact, do not. According to an S&P Global Market Intelligence survey of over 1,000 enterprises across North America and Europe, 42 percent of companies abandoned most of their AI initiatives in 2025, up sharply from 17 percent the year before. The average organization scrapped 46 percent of its AI proofs-of-concept before they reached production.

That is not a technology problem. The models work. The cloud infrastructure is mature. The tooling has never been more accessible. What keeps failing is everything that surrounds the technology: the problem definition, the data, the organizational ownership, and the criteria for success. Fix those, and AI delivers. Leave them unaddressed, and the pilot dies in a conference room presentation with a slide that says “next steps.”

The companies generating consistent, measurable returns from AI are not the ones with the largest budgets or the most sophisticated technical teams. They are the ones who got a few fundamental things right before they bought anything. And the pattern, when you look at it clearly, is consistent across industries.

The Gap Between Adoption and Value Is Not Closing

Spending on AI is accelerating. Enterprise generative AI spending reached $13.8 billion in 2024, a six-fold increase from $2.3 billion in 2023, with most organizations planning further increases in 2025. Yet the returns on that spending remain highly concentrated. McKinsey’s 2025 State of AI survey found that 88 percent of organizations now use AI in at least one business function, but only 39 percent report any EBIT impact at the enterprise level. Most are still in the experimenting or piloting stage, with roughly one-third reporting that their companies have begun to scale their AI programs.

BCG’s research puts the number even more plainly: three-quarters of companies have yet to unlock value from AI.

The gap between adoption and value is not closing with more investment. It closes with a different approach. The organizations on the right side of that gap share four characteristics worth examining closely.

Trait 1: They defined the problem before they selected a tool

This sounds obvious. It is consistently ignored.

The typical sequence at organizations that struggle with AI goes like this: a vendor demonstrates a compelling capability, leadership approves a pilot, the pilot runs for three to six months, and the team eventually discovers that the capability does not map cleanly to the operational reality of the business. The model was not the problem. The problem was that no one clearly articulated what business question was being answered, what decision would be made differently, or what the cost of not solving it actually was.

McKinsey’s 2025 AI survey found that organizations reporting significant financial returns from AI are twice as likely to have redesigned end-to-end workflows before selecting modeling techniques. That sequence matters: workflow first, technology second. The companies that succeed start by asking what specific operational outcome they are trying to improve, who owns that outcome, and what a 20 percent improvement would be worth in dollars. The AI selection follows from those answers.
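The last of those questions is simple arithmetic, and it is worth writing down before any vendor conversation. A minimal sketch in Python follows; the function name and the dollar figure are hypothetical, chosen only to illustrate the calculation.

```python
def improvement_value(annual_cost: float, improvement: float = 0.20) -> float:
    """Annual dollar value of reducing a known cost by a given fraction."""
    if not 0 <= improvement <= 1:
        raise ValueError("improvement must be a fraction between 0 and 1")
    return annual_cost * improvement


# Hypothetical figure for illustration only: what the status quo
# (say, manual rework in one process) costs the business per year.
annual_rework_cost = 1_500_000

value = improvement_value(annual_rework_cost)
print(f"A 20 percent improvement is worth about ${value:,.0f} per year")
```

If the business owner cannot supply the input to this calculation, that is usually a sign the problem has not yet been defined well enough to select a tool for it.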

MIT research confirms this: AI’s most consistent successes are found in back-office functions such as compliance and operational support, rather than in high-visibility areas like sales and marketing. Success often comes when organizations tackle one pain point at a time, instead of pursuing broad, unfocused rollouts.

The organizations winning at AI did not start with a grand strategy. They started with a specific, bounded problem where the status quo had a quantifiable cost and a meaningful owner. That is a very different starting point than “we need an AI strategy.”

Trait 2: They treated data readiness as a prerequisite, not a parallel workstream

Informatica’s 2025 CDO Insights survey identifies data quality and readiness as the top obstacle to AI success, with 43 percent of respondents citing it. Only 12 percent of organizations report that their data is of sufficient quality and accessibility for AI applications.

This is the most consistently underestimated gap in enterprise AI programs. Organizations budget for model development, for cloud infrastructure, for licenses, and for change management. They do not budget for the upstream work of making their data fit for purpose. Then they are surprised when the model produces outputs no one trusts.

Winning programs invert the typical spending ratio, earmarking 50 to 70 percent of the timeline and budget for data readiness (extraction, normalization, governance, quality dashboards, and retention controls) before a single model is trained or a single API is called.

The practical implication is that AI readiness is, in most organizations, fundamentally a data modernization question. A company whose customer records are split across three CRMs, whose transaction data has no consistent field definitions, and whose operational data lives in department spreadsheets is not AI-ready. It is not a matter of the model’s capability. The model will faithfully reflect the quality of what it is given.
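A first-pass readiness check does not need special tooling. The sketch below, in plain Python, measures how complete a few required fields are and flags identifiers that appear more than once after records from several systems are combined. The field names and sample records are hypothetical, and a real assessment would cover far more than these two checks.

```python
from collections import Counter

# Hypothetical required fields for a customer-facing use case.
REQUIRED_FIELDS = ("customer_id", "email", "created_at")


def field_completeness(records, fields=REQUIRED_FIELDS):
    """Return the share of records with a non-empty value for each field."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in fields}
    filled = Counter()
    for record in records:
        for field in fields:
            if record.get(field) not in (None, ""):
                filled[field] += 1
    return {field: filled[field] / total for field in fields}


def duplicate_ids(records, key="customer_id"):
    """Return identifiers that appear in more than one record."""
    counts = Counter(r.get(key) for r in records if r.get(key))
    return sorted(k for k, n in counts.items() if n > 1)


# Hypothetical records, as if merged from more than one CRM export.
records = [
    {"customer_id": "C1", "email": "a@example.com", "created_at": "2024-01-05"},
    {"customer_id": "C1", "email": "", "created_at": "2024-02-11"},
    {"customer_id": "C2", "email": None, "created_at": "2024-03-02"},
    {"customer_id": "", "email": "d@example.com", "created_at": None},
]

print(field_completeness(records))
print(duplicate_ids(records))
```

Numbers like these give the business owner and the data team a shared, concrete view of what has to be fixed before model work starts.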

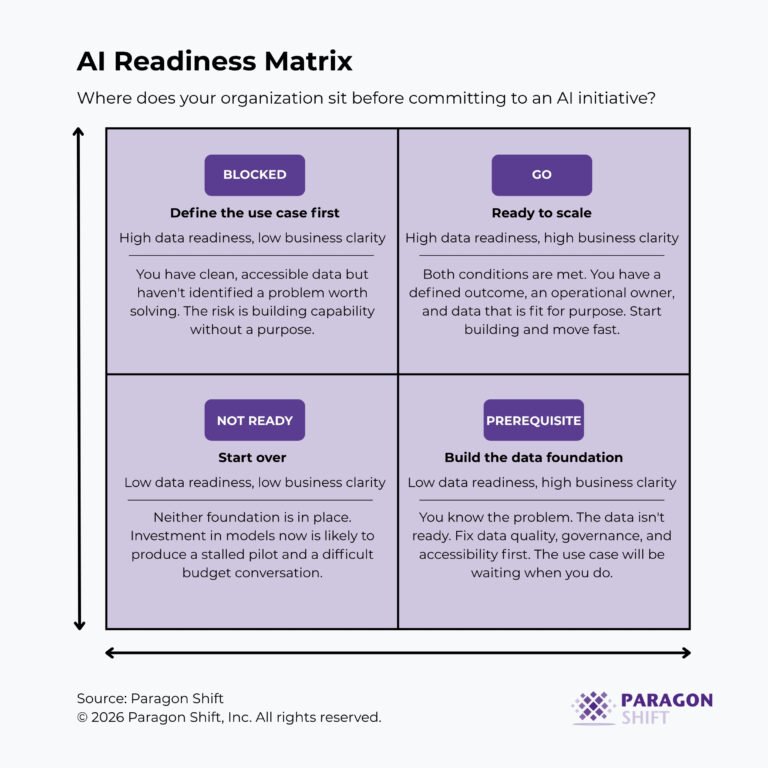

At Paragon Shift, the assessment we conduct before any AI engagement includes a structured evaluation of data quality, accessibility, and governance against the specific use case being targeted. In most organizations, that evaluation surfaces two or three data infrastructure gaps that, if left unaddressed, would predict the failure of the AI initiative before development begins. Addressing them first is not a detour. It is the work.

Trait 3: They began with a scope that was narrower than their perceived ambition

There is real organizational pressure on AI programs to be comprehensive. Executives want platform-level transformation. Budgets get approved for enterprise-wide initiatives. The roadmap covers fifteen use cases. And then, predictably, nothing ships.

BCG’s survey data show that companies creating significant value from AI have done so by focusing on a small set of initiatives, scaling them swiftly, changing core processes, and systematically measuring operational and financial returns. AI leaders pursue, on average, only about half as many opportunities as their less advanced peers but successfully scale more than twice as many.

The distinction is precision, not ambition. A regional bank that deploys a tightly scoped document classification model in its commercial lending operation, measures the cycle time reduction, and uses that proof point to fund the next initiative is following the right sequence. A manufacturer that attempts to deploy predictive maintenance, demand forecasting, quality inspection automation, and inventory optimization simultaneously, with a shared data team and a single implementation partner, is not.

Gartner’s research makes the cost of overreach concrete: it predicted that at least 30 percent of generative AI projects would be abandoned after proof of concept by the end of 2025, with poor data quality, escalating costs, and unclear business value cited among the primary causes. Scope is a risk variable. Narrower scope means faster validation, cleaner data requirements, and a higher probability that someone in the business owns the outcome enough to fight for it.

The companies that now have five or six AI capabilities running in production typically started with one. That one worked, generated a return that was visible to the finance team, and earned the organizational credibility to fund the next. The path to scale runs through the first concrete win, not around it.

Trait 4: They kept business ownership in the business

BCG’s analysis of AI leaders found that the key factors for scaling AI are largely people- and process-related: change management, workflow optimization, AI talent, and governance. BCG’s recommended resource allocation is 70 percent to people and processes, 20 percent to technology and data, and 10 percent to algorithms.
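Applied to a budget, that split looks like the following. This is a small illustrative sketch in Python: the category labels paraphrase the allocation described above, and the budget figure is hypothetical.

```python
# Percentage split as described above; the labels are our shorthand.
ALLOCATION_PCT = {
    "people_and_processes": 70,
    "technology_and_data": 20,
    "algorithms": 10,
}


def allocate(budget: float, split=ALLOCATION_PCT) -> dict:
    """Divide a program budget according to a percentage split."""
    if sum(split.values()) != 100:
        raise ValueError("allocation percentages must sum to 100")
    return {name: budget * pct / 100 for name, pct in split.items()}


# Hypothetical program budget, for illustration only.
for name, amount in allocate(2_000_000).items():
    print(f"{name}: ${amount:,.0f}")
```

Comparing a split like this with how an existing AI budget is actually distributed is a quick way to see whether a program is weighted toward technology rather than adoption.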

Yet in most organizations, AI programs are treated as IT initiatives. A CIO or CTO owns the program. A data science team builds the models. The business units are consulted during requirements gathering and then handed a product. That structure produces technically functional AI that no one in the business uses, trusts, or maintains.

The organizations that scale AI effectively maintain clear business ownership at every stage. The use case is identified by a business leader, not surfaced by a vendor. The success criteria are defined in operational terms, not model accuracy metrics. The person accountable for the outcome is someone whose performance review includes the metric the AI is meant to move.

Gartner’s survey of organizations with high AI maturity found that in 57 percent of those organizations, business units trust and are ready to use new AI solutions. In low-maturity organizations, that figure drops to 14 percent. Trust is not a product feature. It comes from business units having been part of the process from the beginning, not presented with a finished tool at the end.

This is where most organizations need the most support: not in model selection or infrastructure design, but in building the operating model that keeps AI programs business-led rather than technology-led. At Paragon Shift, the engagements that produce durable results are those in which we work alongside a named business owner with a clear stake in the outcome, rather than delivering to an IT backlog.

What These Traits Have in Common

None of the four traits described above requires a large budget. None requires a sophisticated in-house data science team. None requires a particular cloud platform or a preferred AI vendor.

What they require is discipline: the discipline to define the problem before selecting the tool, to fix the data before training the model, to prove value in one place before expanding to fifteen, and to keep accountability for outcomes in the hands of the people who own the operations being changed.

The companies seeing the most value from AI are the ones that set growth and innovation as objectives, not just efficiency. But the path to those outcomes runs through a series of unglamorous, operational decisions that most organizations skip in the rush to deploy.

The organizations that are winning at AI are not the ones that moved fastest. They are the ones that set up the conditions for success before they started building.

Key Takeaways

1. AI failure is overwhelmingly a pre-model problem. Data quality, problem definition, and organizational ownership account for the vast majority of failed initiatives. The model is rarely the issue.

2. Organizations generating real returns pursue fewer initiatives, not more. Precision and follow-through consistently outperform breadth.

3. Data readiness is a prerequisite, not a parallel workstream. An AI program built on unprepared data will fail regardless of the model’s capability.

4. Business ownership determines whether AI scales. Technology-led programs produce tools. Business-led programs produce outcomes.

5. The companies furthest along in AI did not start with the most ambitious programs. They started with the most clearly defined ones, proved value quickly, and built from there.

Conclusion

The AI programs generating real returns in 2025 look different from what most executives imagine when they picture an AI strategy. They are not enterprise-wide transformations. They are not multi-year platform replacements. They are specific, bounded, business-owned initiatives built on clean data, with clear success criteria and an operational owner who has something at stake in the result.

If your organization is somewhere between “we have done some pilots” and “we are not sure how to scale,” the gap is seldom the technology. It is the four things described above, and they are fixable.