Responsible AI in Business Environments:

Principles, Risks, & Implementation

Artificial intelligence is quickly becoming part of everyday business operations. But without the right governance and oversight, AI systems can introduce risk as easily as they create value. Responsible AI provides the framework organizations need to scale AI confidently while maintaining trust, transparency, and accountability.

What Responsible AI Means in Business

Artificial intelligence is no longer confined to research labs or experimental innovation teams. It now influences pricing strategies, supply chain decisions, fraud detection, customer engagement, and financial forecasting.

As AI becomes embedded in core operations, the stakes increase.

Responsible AI refers to the design, deployment, and management of AI systems in ways that are transparent, accountable, and aligned with ethical and regulatory expectations.

According to EY, responsible AI focuses on ensuring that artificial intelligence systems operate fairly and safely while minimizing unintended consequences.

For business leaders, the concept becomes practical very quickly. It raises questions such as:

- Can we explain how an algorithm reached a decision?

- Are we confident that the model is using reliable data?

- Could the system unintentionally introduce bias into outcomes?

- Who is accountable when automated decisions affect customers or operations?

Responsible AI is ultimately about building trust in AI systems, internally among employees and externally with customers, partners, and regulators.

Without that trust, AI initiatives often struggle to scale beyond isolated experiments.

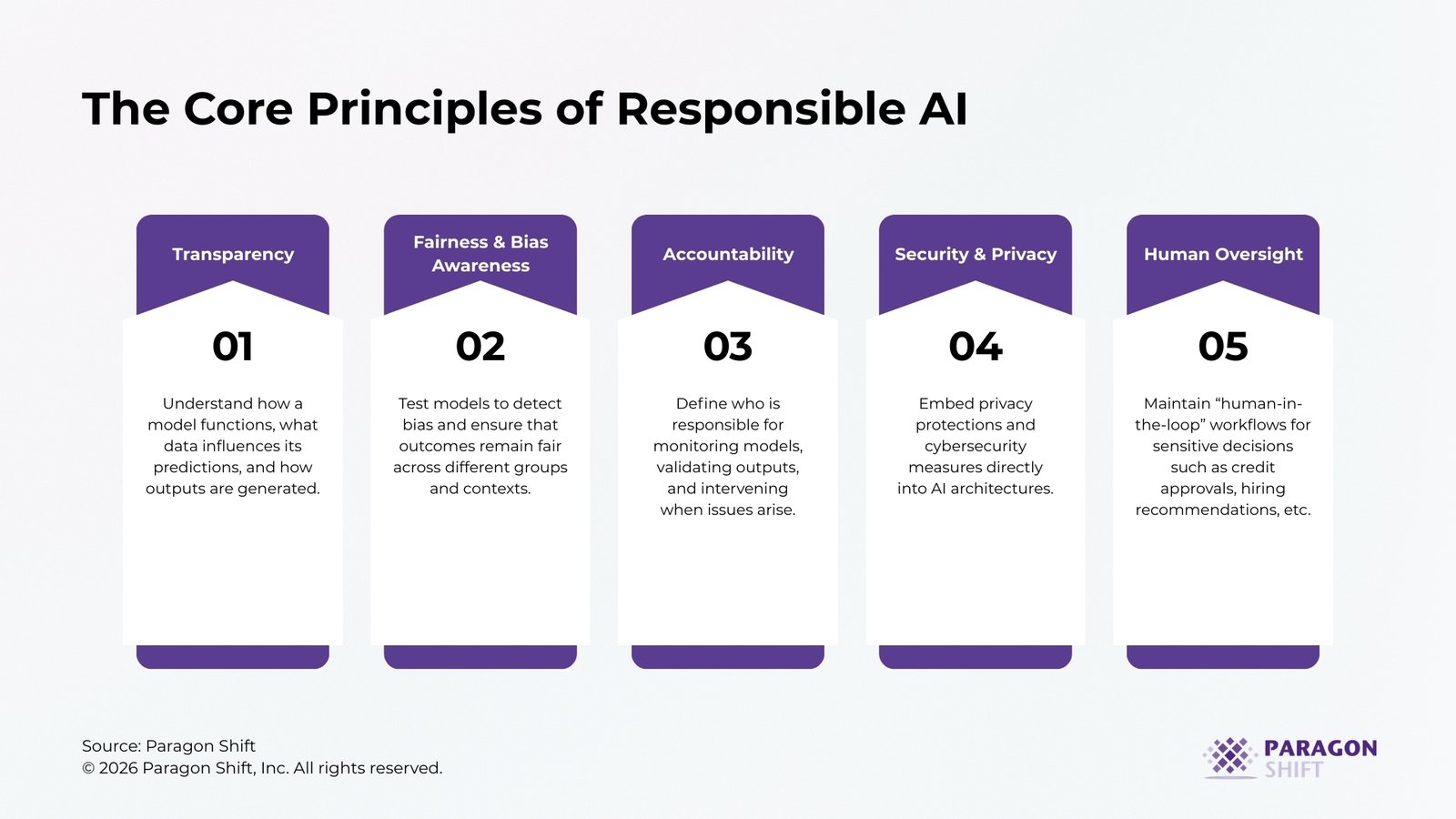

The Core Principles of Responsible AI

Although different organizations describe responsible AI in different ways, several core principles appear consistently across frameworks developed by institutions such as the World Economic Forum, PwC, and the OECD.

Transparency

AI systems should not operate as opaque “black boxes” inside an organization.

Leaders should be able to understand how a model functions, what data influences its predictions, and how outputs are generated. Transparency is particularly important when AI affects financial decisions, risk assessments, or customer interactions.

Explainability tools and model documentation are often key elements of this principle.

Fairness and Bias Awareness

AI systems learn patterns from historical data. If those datasets contain bias or incomplete representation, the model can unintentionally reproduce those patterns.

Responsible organizations actively test models to detect bias and ensure that outcomes remain fair across different groups and contexts.

For industries such as finance, healthcare, and insurance, bias testing is becoming a regulatory and reputational priority.
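As a minimal sketch of what testing a model for bias can look like, the Python below compares approval rates across groups and computes the ratio of the lowest rate to the highest. The group names, the sample decisions, and the 0.8 review threshold (a rule of thumb borrowed from the "four-fifths rule" used in US employment contexts) are assumptions for illustration, not a recommended standard for any particular industry.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive outcomes per group.

    `records` is an iterable of (group, approved) pairs, where `approved` is a bool.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive outcome, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical model decisions: 60 of 100 approved in group_a, 42 of 100 in group_b.
decisions = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 42 + [("group_b", False)] * 58
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed threshold; set it with legal and risk teams
```

A low ratio does not prove that a model is unfair, but it is a reasonable trigger for a closer look at the data and the features driving the gap.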

Accountability

AI should not operate without clear ownership.

Organizations must define who is responsible for monitoring models, validating outputs, and intervening when issues arise. This typically involves governance structures that combine technical teams, business leaders, and risk management functions.

Without accountability, AI systems often drift into operational blind spots.

Security and Privacy

Modern AI systems depend on large volumes of data, much of which can be sensitive.

Responsible AI frameworks require strong safeguards around how data is collected, stored, and used. Privacy protections and cybersecurity measures must be embedded directly into AI architectures.

Failure to address these issues early can expose organizations to significant legal and reputational risks.

Human Oversight

Despite rapid technological progress, AI should still operate as a decision-support tool rather than an autonomous authority in high-stakes environments.

Human oversight ensures that critical decisions can be reviewed, challenged, and corrected when necessary.

In practice, this often means maintaining “human-in-the-loop” workflows for sensitive decisions such as credit approvals, hiring recommendations, or medical analysis.

Why Responsible AI Matters for Business Leaders

Responsible AI is sometimes framed as a philosophical debate about technology ethics. In reality, it is far more practical.

Organizations that fail to address responsible AI often encounter barriers that slow down adoption and limit the value of their AI investments.

Regulatory Pressure Is Increasing

Governments around the world are introducing new frameworks for AI oversight.

These regulations increasingly require transparency in automated decision-making and stronger governance around data usage. Organizations that adopt responsible AI practices early will find it much easier to adapt as regulatory expectations evolve.

Trust Determines Adoption

Even the most sophisticated AI models fail if employees do not trust their outputs. Decision-makers must feel confident that AI-generated insights are reliable before they incorporate them into operational decisions. Responsible AI practices, especially transparency and governance, play a central role in building that confidence.

AI Failures Carry Reputational Risk

When AI systems produce biased or inaccurate outcomes, the consequences can extend far beyond technical issues.

Companies have faced public backlash when algorithms produced discriminatory outcomes or misleading recommendations. Responsible AI practices reduce the likelihood of these scenarios.

Scaling AI Requires Governance

Research from McKinsey & Company consistently shows that organizations struggle to scale AI initiatives beyond isolated pilots.

The reason is rarely the algorithm itself. More often, organizations lack the governance, alignment, and operational structures required to deploy AI across business functions responsibly.

Responsible AI frameworks provide the discipline needed to move from experimentation to enterprise adoption.

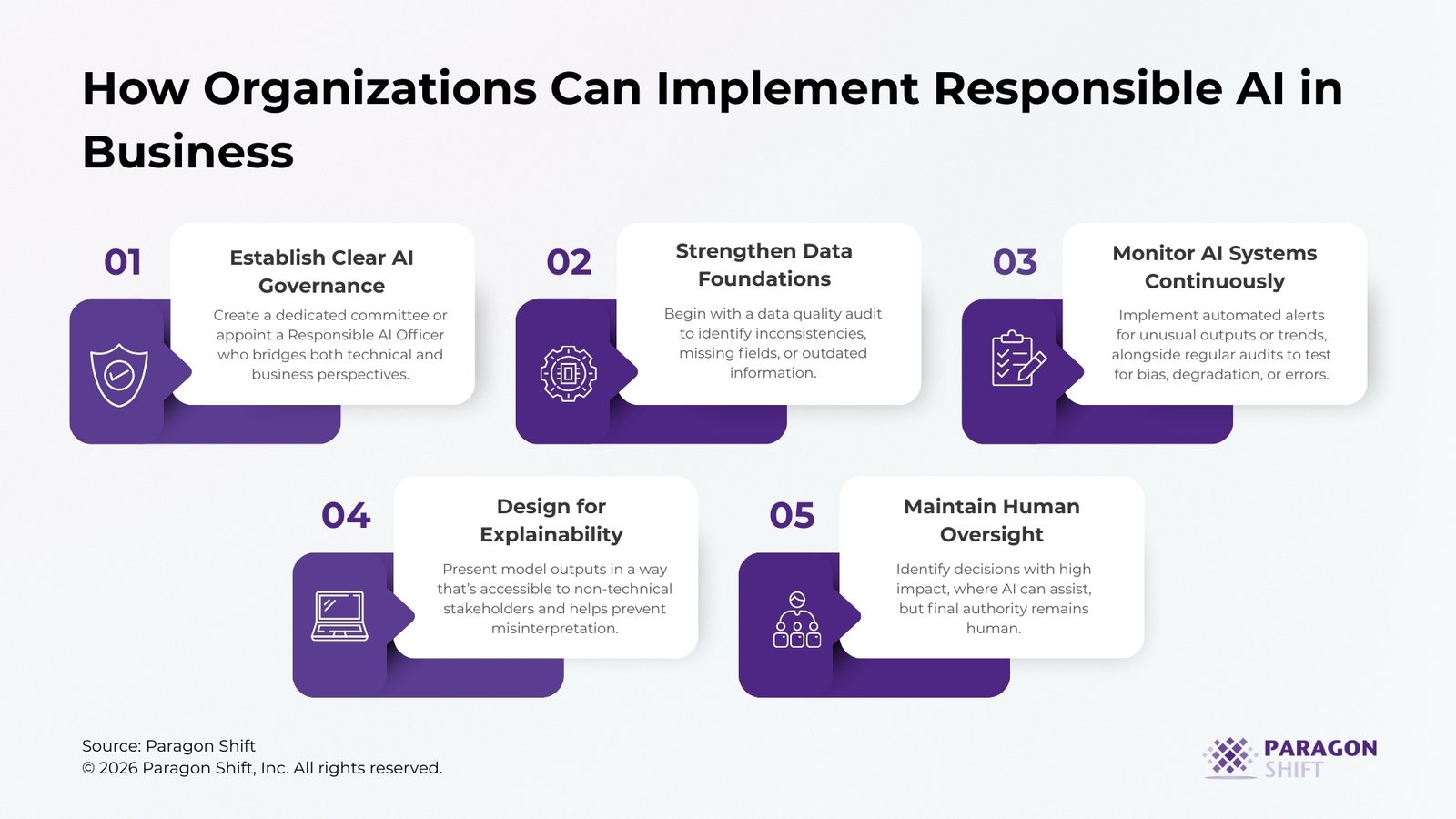

How Organizations Can Implement Responsible AI in Business

Responsible AI is not achieved through a single policy or technology platform. It requires a structured approach that combines governance, data management, and operational oversight. To make it tangible, organizations need to operationalize each element in a way that produces real business value.

Step 1: Establish Clear AI Governance

Effective AI begins with clear governance. Organizations should create a dedicated committee or appoint a Responsible AI Officer who bridges technical and business perspectives. This function defines decision-making authority, accountability, and risk acceptance for AI initiatives, ensuring that every model deployed is aligned with the organization’s goals and regulatory obligations. For example, a financial services firm might conduct quarterly executive reviews of AI-driven credit decisions, assessing outcomes against compliance and ethical standards. By embedding governance early, companies avoid the common pitfall of deploying models without oversight or accountability.

Step 2: Strengthen Data Foundations

AI outputs are only as good as the data behind them. Businesses should begin with a data quality audit to identify inconsistencies, missing fields, or outdated information. Establishing data lineage and version control ensures transparency and traceability, so teams always know where the data originates and how it has been processed. A practical way to start is by selecting a critical dataset, cleaning and standardizing it, and validating results before scaling. For instance, a healthcare provider ensures all patient records are normalized and de-duplicated before using AI to predict treatment outcomes. By prioritizing data foundations, organizations build a reliable base that supports accurate and trustworthy AI decisions.
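A first-pass data quality audit can be quite small. The sketch below counts rows with missing required fields, duplicate identifiers, and records that have not been updated recently. The field names (`patient_id`, `birth_date`, `last_updated`), the one-year staleness cutoff, and the sample rows are hypothetical and would need to match the real dataset.

```python
from datetime import date

# Hypothetical schema: the fields every record is expected to carry.
REQUIRED_FIELDS = ("patient_id", "birth_date", "last_updated")


def audit_records(records, today, max_age_days=365):
    """Count rows with missing fields, duplicate IDs, and stale update dates."""
    report = {"rows": len(records), "missing": 0, "duplicates": 0, "stale": 0}
    seen_ids = set()
    for row in records:
        # A field counts as missing if it is absent, None, or an empty string.
        if any(row.get(field) in (None, "") for field in REQUIRED_FIELDS):
            report["missing"] += 1

        record_id = row.get("patient_id")
        if record_id not in (None, ""):
            if record_id in seen_ids:
                report["duplicates"] += 1
            seen_ids.add(record_id)

        updated = row.get("last_updated")
        if isinstance(updated, date) and (today - updated).days > max_age_days:
            report["stale"] += 1
    return report


# Made-up rows for illustration only.
sample = [
    {"patient_id": "p1", "birth_date": date(1980, 5, 1), "last_updated": date(2024, 3, 1)},
    {"patient_id": "p1", "birth_date": date(1980, 5, 1), "last_updated": date(2024, 3, 1)},
    {"patient_id": "p2", "birth_date": None, "last_updated": date(2021, 1, 15)},
]
report = audit_records(sample, today=date(2024, 6, 1))
```

Running the same audit before and after cleaning a dataset gives a simple, comparable measure of whether the cleanup actually improved it.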

Step 3: Monitor AI Systems Continuously

AI models do not stay reliable on their own. The data they receive changes over time, and the assumptions made during training can drift away from current conditions. Continuous monitoring is essential to catch deviations early and maintain reliability. Organizations can implement automated alerts for unusual outputs or trends, alongside regular audits to test for bias, degradation, or errors. For example, an insurance company might track its AI-driven claims scoring model weekly, allowing teams to detect shifts before they affect payouts. Consistent monitoring keeps AI aligned with business goals as conditions change.
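One common way to turn this kind of monitoring into an automated alert is the Population Stability Index (PSI), which compares the distribution of recent model scores with a baseline. The sketch below assumes scores between 0 and 1 and uses made-up samples. The alert threshold of 0.25 is a widely quoted rule of thumb rather than a fixed standard, and the variable names are illustrative.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of scores in [0, 1]."""

    def bucket_shares(scores):
        counts = [0] * bins
        for score in scores:
            index = min(int(score * bins), bins - 1)  # a score of exactly 1.0 goes in the last bucket
            counts[index] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(count / len(scores), 1e-6) for count in counts]

    base = bucket_shares(expected)
    recent = bucket_shares(actual)
    return sum((r - b) * math.log(r / b) for b, r in zip(base, recent))


# Hypothetical samples: baseline scores spread evenly, recent scores shifted upward.
baseline_scores = [i / 100 for i in range(100)]
recent_scores = [0.5 + i / 200 for i in range(100)]

stable_psi = population_stability_index(baseline_scores, baseline_scores)
drifted_psi = population_stability_index(baseline_scores, recent_scores)

ALERT_THRESHOLD = 0.25  # assumed rule of thumb; tune per model
should_alert = drifted_psi > ALERT_THRESHOLD
```

A check like this could run on whatever schedule the team reviews the model, with an alert prompting a human investigation rather than an automatic retraining.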

Step 4: Design for Explainability

AI should illuminate decisions rather than obscure them. Explainable AI allows business leaders to understand the drivers behind each recommendation, supporting confidence and informed action. Using visualization tools or dashboards, companies can present model outputs in a way that’s accessible to non-technical stakeholders, and documenting assumptions and limitations helps prevent misinterpretation. For instance, a retail chain uses AI to recommend inventory allocation by store, providing managers with visibility into which sales trends and local factors influenced each recommendation. Explainability transforms AI from a black box into a trusted decision-making tool.
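For a simple linear scoring model, the drivers behind a recommendation can be listed directly: each feature's contribution is its weight multiplied by how far the input sits from a reference value. The sketch below assumes such a linear model. The feature names, weights, and store values are invented for illustration, and non-linear models generally need dedicated attribution methods instead.

```python
def explain_linear_score(weights, baseline, features):
    """Break a linear model's score into per-feature contributions versus a baseline.

    Returns (feature, contribution) pairs sorted by absolute impact, largest first.
    """
    contributions = {
        name: weights[name] * (features[name] - baseline[name])
        for name in weights
    }
    return sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)


# Hypothetical inventory model: extra units recommended relative to the chain average.
weights = {"recent_sales_trend": 40.0, "local_event_days": 15.0, "days_of_stock": -2.0}
chain_average = {"recent_sales_trend": 0.0, "local_event_days": 1.0, "days_of_stock": 14.0}
store = {"recent_sales_trend": 0.5, "local_event_days": 3.0, "days_of_stock": 9.0}

drivers = explain_linear_score(weights, chain_average, store)
for name, impact in drivers:
    print(f"{name}: {impact:+.1f} units")
```

A ranked list like this is the kind of output a dashboard can show a store manager next to each recommendation.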

Step 5: Maintain Human Oversight in Critical Decisions

Even the best AI should augment human judgment, not replace it. Organizations need to identify decisions with high impact, such as loan approvals or hiring recommendations, where AI can assist, but final authority remains human. Workflows should include cross-functional review to combine AI insights with domain expertise, reducing risk while maintaining efficiency. For example, a lending institution flags borderline loan applications for human review, ensuring that qualitative factors complement algorithmic predictions. Maintaining human oversight keeps AI aligned with organizational values and ensures accountability.
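The borderline-review workflow can be sketched as a simple routing rule: the model's score produces a recommendation only when it is clearly high or low, and everything in between goes to a person. The thresholds, the outcome labels, and the `route_application` helper are assumptions for illustration. A real policy would be set by the governance function, not hard-coded.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    application_id: str
    outcome: str  # "recommend_approve", "recommend_decline", or "human_review"
    reason: str


def route_application(application_id, approval_probability, low=0.35, high=0.75):
    """Route a scored application; scores between `low` and `high` go to a human."""
    if not 0.0 <= approval_probability <= 1.0:
        raise ValueError("approval_probability must be between 0 and 1")
    if approval_probability >= high:
        return Decision(application_id, "recommend_approve", "score at or above upper threshold")
    if approval_probability <= low:
        return Decision(application_id, "recommend_decline", "score at or below lower threshold")
    return Decision(application_id, "human_review", "borderline score")


# Hypothetical applications and scores.
clear_case = route_application("app-001", 0.90)
borderline_case = route_application("app-002", 0.50)
weak_case = route_application("app-003", 0.10)
```

Recording the reason alongside each outcome gives reviewers and auditors a trail showing why an application was or was not escalated.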

Responsible AI Is Ultimately a Leadership Challenge

Many organizations treat responsible AI as a technical concern. In practice, it is just as much a leadership and governance challenge.

Executives must decide how AI aligns with company values, risk tolerance, and long-term strategy. They must also ensure that data foundations, governance structures, and operational controls are strong enough to support AI at scale. When responsible AI becomes part of the broader data strategy, organizations can innovate with far greater confidence.

Conclusion

Artificial intelligence is reshaping how businesses operate, from operational forecasting to customer engagement and strategic planning. But the value of AI depends on trust.

Responsible AI provides the guardrails that allow organizations to adopt powerful technologies without introducing unnecessary risk. By prioritizing transparency, accountability, and governance, companies can build AI systems that are both innovative and dependable.

Organizations that embed responsible AI into their data strategies today will be better prepared to scale AI initiatives tomorrow.